Assessment, Evaluation, and Research

The Assessment, Evaluation, and Research competency area (AER) focuses on the ability to use, design, conduct, and critique qualitative and quantitative AER analyses; to manage organizations using AER processes and the results obtained from them; and to shape the political and ethical climate surrounding AER processes and uses on campus. [1]

In my graduate school experience, I have had several opportunities to use, design, and conduct various forms of research, assessment, and evaluation. My first-year internship with the Explorations/Study Abroad office at Baldwin-Wallace College (B-W) offered several chances to engage in research and benchmarking for the office. One such benchmarking project involved researching the number of students sent abroad per year, the student-to-staff ratio of faculty-led programs, and the total number of professional staff members in the study abroad offices and operations of peer institutions. By contacting peer institutions and compiling the data, I was able to quantify the differences between B-W and its peer institutions.

The next step was to assess and analyze the data in light of the mission and vision of the B-W Explorations/Study Abroad office, as well as those of the institution as a whole. I found that most peer institutions were sending fewer students abroad while employing more professional staff in their study abroad offices. Given B-W's desire for every student to engage in some form of experiential learning (including studying abroad, volunteering, or completing an internship), this benchmarking exercise was used to lobby for the promotion of a part-time study abroad staff member to full-time, thus providing greater resources to interested, current, and returned study abroad students.

Throughout this process, I drew on professional literature to support the data that had been collected, using publications from professional organizations devoted to international education. One of the greatest challenges was combining those resources with the data I collected and presenting the results in a message that would be effective and understandable to study abroad practitioners, senior academic and student affairs officers, and potentially institutional presidents. The task required succinct writing that showed that the mission and outcomes of the office were in fact congruent with those of the institution, and that the office helped to accomplish the institution's mission and intended outcomes.

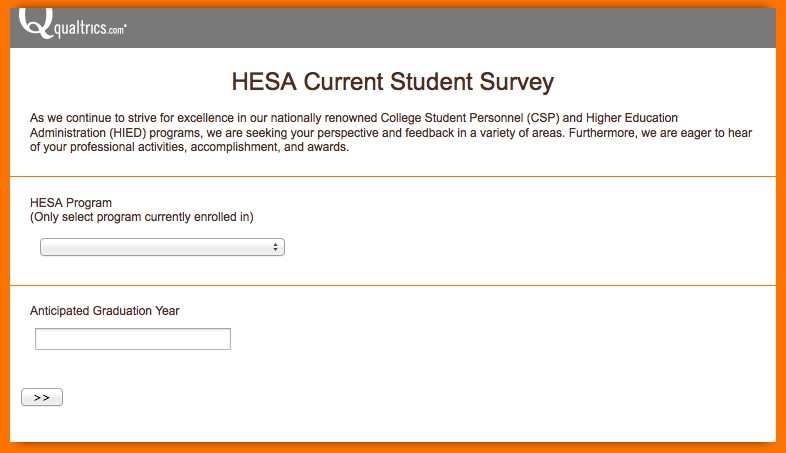

The second activity that helped me reach exemplary levels of competency in assessment has been creating and preparing to distribute a survey based on the mission, vision, and values of the College Student Personnel and Higher Education Administration programs. The survey is designed to assess the professional and personal involvement of current students in each program, along with their development in the ACPA/NASPA professional competencies during their time in the program.

One intriguing aspect of this assessment is that it has also been designed to align with the data needed for a program review, which makes the collected data more useful and more significant. Another is the use of up-to-date technology to create and distribute the survey, as well as to collect and analyze the data. These electronic resources have made it easier to seek consultation regarding the survey and the instruments used.

Although the survey has yet to be distributed, using these assessment and evaluation methods to create it has given me opportunities to learn which language and vocabulary are appropriate for ensuring that the collected results are congruent with the intended results. Both activities have reinforced the need for clear, enacted missions and values, as well as the need in contemporary higher education to assess how well the department, division, and institution as a whole achieve those missions and values.

